Introduction

A $50,000 validation experiment shouldn't take 12 months. Yet for most enterprises, it does.

Compliance gates, legacy systems, overstretched teams, and multi-layered approval processes consistently kill momentum before a single line of production code ships. According to a McKinsey/Oxford study of 5,400 large IT projects, 17% become "black swans" — suffering budget overruns of 200% to 400%.

For enterprises, the MVP approach that works for startups often fails. "Minimum" means something entirely different when you factor in security mandates, regulatory compliance, and the need to integrate with mission-critical systems from day one. The challenge isn't just building quickly—it's building quickly while meeting enterprise-grade standards that regulated industries cannot defer.

This guide addresses how enterprises can validate new digital products without the financial and operational risks that plague traditional monolithic rollouts. It covers the real differences between startup and enterprise MVPs, the phased process required to move fast without breaking things, and how to decide when to scale, when to pivot, and when to cut your losses.

TLDR

- Enterprise MVPs require production-grade security, compliance, and integration from day one — not deferred to Phase 2

- The biggest risks are scope creep, internal resistance, and deferring compliance until rework becomes unavoidable

- Agile projects are 3X more likely to succeed than Waterfall projects, and large traditional projects succeed less than 10% of the time

- MVP timelines typically run 10–20 weeks, extending to 6–12 months in healthcare and finance due to compliance requirements

- A structured build process is essential — 70% of digital transformations fail without one

What Is Enterprise MVP Development (and Why "Minimum" Means Something Different)

An MVP is the earliest, leanest version of a product that includes only the features needed to test a core hypothesis with real users—without building everything upfront. The goal is validated learning: confirming or disproving key assumptions before committing to full-scale investment.

Why Enterprise Context Changes the Definition of "Minimum"

In enterprise environments, several elements are non-negotiable from day one — not optional extras to add later:

- Security authentication and access controls

- Audit logging and data governance

- Basic compliance measures relevant to the industry

- Integration with existing identity management or ERP systems

Forrester defines an MVP as the output requiring the least effort to build that delivers the best ability to learn about customer needs. The Scaled Agile Framework (SAFe) describes it as an early, minimal version of a new solution sufficient to prove or disprove an epic hypothesis. For mid-to-large B2B organisations, meeting that bar requires strict adherence to corporate governance, security baselines, and integration constraints.

Consider a financial services company testing a new customer onboarding flow. It cannot ship a rough prototype. From day one, it must address PCI DSS compliance, integration with existing identity management systems, and enterprise security protocols. Skipping these elements doesn't just create technical debt; it creates regulatory risk that can halt the project entirely.

A second distinction matters just as much in enterprise settings: the difference between an MVP and a proof of concept.

Enterprise MVP vs. Proof of Concept (PoC)

A PoC tests technical feasibility: Can we build this? It validates whether a specific technology or integration approach is viable. An MVP tests market or organisational viability: Should we build this, and will people use it? It validates whether users will actually adopt it and whether it justifies further investment.

In enterprise product development, the PoC typically precedes the MVP. Once technical viability is confirmed through a PoC, the MVP tests whether the solution justifies full investment.

The Innovation Paradox

Large organisations can fund almost any initiative — yet validating a new idea quickly is where they consistently fall short. Approval processes designed to protect large investments end up delaying small experiments. The result: innovation initiatives that should take weeks stretch into quarters, burning budget on extended planning cycles while more agile competitors move first.

Enterprise vs. Startup MVP: Key Differences

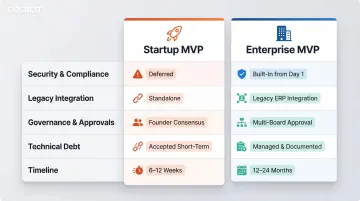

Startups can accept technical debt, skip compliance, and rebuild architecture later. Enterprises cannot. Every MVP must be built on foundations that are auditable, secure, and extensible.

Key Constraint Differences

| Constraint Category | Startup MVP Approach | Enterprise MVP Requirement |
|---|---|---|
| Security & Compliance | Deferred to post-launch; basic SSL | Built-in from Day 1 (HIPAA, PCI DSS, ISO 27001) |
| Legacy Integration | Standalone application; modern APIs | Must integrate securely with legacy ERPs and IAM systems |
| Governance & Approvals | Founder/team consensus | Architecture Review Boards, InfoSec, Legal, Procurement |
| Technical Debt | Accepted for speed-to-market | Strictly managed; regulators penalize unpatched vulnerabilities |
| Timeline | 4–8 weeks | 10–20 weeks (6–12 months in regulated sectors) |

Timeline and Governance Overhead

Consumer startups ship MVPs in weeks. Enterprise builds typically run 10–20 weeks, and stretch to 6–12 months in healthcare and pharma. Complex procurement cycles (2–6 months) and multi-stage Architecture Review Boards drive these timelines, not engineering complexity alone.

The Non-Viability of Technical Debt

Regulatory bodies are explicit on this point. For enterprises operating in finance, healthcare, or government sectors:

- The OCC warns that postponing system updates or delaying architecture upgrades creates unwarranted risks

- The FFIEC Information Security Booklet mandates timely patch review and installation procedures

- Regulators penalize unpatched vulnerabilities — not just breaches

Deferring security for speed isn't a trade-off. In regulated environments, it's a compliance failure.

Different Success Metrics

A startup MVP succeeds when users engage with it. An enterprise MVP must clear a broader bar: user adoption, operational feasibility, security compliance, and integration reliability — all at once. The validation criteria reflect a higher level of organizational risk, not just product ambition.

Why Enterprises Need MVPs: Key Benefits

Risk Reduction Through Validation

Committing to full product development without a validated hypothesis puts multi-million-dollar budgets at risk. McKinsey's analysis shows that large IT projects (over $15 million) run 45% over budget and deliver 56% less value than predicted. An MVP lets enterprises confirm market demand or internal adoption potential before major investment, directly addressing the root causes of project failure.

Faster Time-to-Market and Competitive Response

The data on development methodology is hard to ignore. Agile projects are 3X more likely to succeed than Waterfall projects, while large traditional projects succeed less than 10% of the time.

Iterative, MVP-driven development reduces financial risk compared to monolithic rollouts — letting enterprises respond to market shifts and competitive threats before a full build is even complete.

Smarter Internal Resource Allocation

Enterprise development teams carry the weight of maintaining existing revenue-generating systems. An MVP framework lets innovation run in parallel — without pulling those teams away from mission-critical operations.

Partnering with specialized external teams addresses this directly. A full-spectrum technology partner covering UI/UX, development, data engineering, and AI integration can validate new concepts without overburdening core staff. This keeps internal resources focused where they generate the most value.

Common Challenges in Enterprise MVP Development

Stakeholder Alignment and Internal Resistance

Large organizations have multiple layers of decision-makers, each with different risk tolerances and success criteria. According to the 17th State of Agile Report, 47% of organizations cite general organizational resistance to change or "culture clash" as a primary barrier to Agile adoption, while 41% point to a lack of leadership participation.

Misaligned expectations between product, IT, compliance, and business units are one of the top reasons enterprise MVPs stall before they launch. Without explicit agreement on what success looks like and which assumptions are being tested, projects drift into scope creep or political gridlock.

Legacy System Integration Complexity

Most enterprise MVPs don't exist in isolation—they must connect to existing ERP systems, identity providers, CRMs, or data pipelines from day one. This integration overhead is often underestimated and inflates both timeline and cost if not scoped carefully from the start.

Legacy systems frequently use outdated protocols, lack modern APIs, and require custom middleware. Each integration point introduces technical complexity, security considerations, and potential failure modes that must be addressed during initial development.

Compliance and Governance Overhead

Regulated industries (finance, healthcare, insurance) cannot defer security, audit logging, or data privacy controls to a later phase. Treating compliance as a Phase 2 problem means expensive rework, delayed launches, or both.

For example, achieving HIPAA compliance can take 1 to 2 years for medium-sized organizations and cost between $60,000 and $90,000. Enterprises that fail to integrate security early face failed audits, regulatory penalties, and the need to rebuild foundational architecture.

Scope Creep Disguised as "Enterprise Requirements"

There's a critical distinction between genuine enterprise-grade non-negotiables and requirements that stakeholders label as "minimum" but are actually nice-to-have features. Research reveals that 80% of software features are rarely or never used, costing publicly traded cloud companies up to $22 billion in missed value.

Enterprises need to distinguish what validates the core hypothesis from what is politically motivated or comfort-driven. A useful filter:

- Does this feature test a specific assumption about user behavior or business value?

- Would the MVP fail to generate meaningful data without it?

- Is it required by regulation, rather than merely expected by internal stakeholders?

If the answer to all three is no, it doesn't belong in the MVP.
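The filter above can be expressed as a simple check. The following is a minimal sketch, not a prescribed tool: the `FeatureRequest` type, its field names, and the sample backlog are all hypothetical and exist only to illustrate the three questions.

```python
from dataclasses import dataclass


@dataclass
class FeatureRequest:
    """A proposed MVP feature, answered against the three filter questions."""
    name: str
    tests_assumption: bool        # tests a specific assumption about behavior or value?
    needed_for_data: bool         # would the MVP fail to produce meaningful data without it?
    required_by_regulation: bool  # mandated by regulation, not just expected internally?


def belongs_in_mvp(feature: FeatureRequest) -> bool:
    """A feature stays in scope if at least one filter question is answered yes."""
    return (
        feature.tests_assumption
        or feature.needed_for_data
        or feature.required_by_regulation
    )


# Hypothetical backlog, invented for illustration
backlog = [
    FeatureRequest("Audit logging", False, False, True),
    FeatureRequest("New onboarding flow", True, True, False),
    FeatureRequest("Customisable UI themes", False, False, False),
]

in_scope = [f.name for f in backlog if belongs_in_mvp(f)]
deferred = [f.name for f in backlog if not belongs_in_mvp(f)]
print("In scope:", in_scope)
print("Deferred:", deferred)
```

Recording the answers per feature, rather than debating them in meetings, gives stakeholders a visible reason for each deferral.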

Measuring the Right Success Metrics

Enterprise MVPs often get evaluated on the wrong criteria—feature completeness or internal satisfaction scores—rather than whether the core hypothesis was validated. Without explicit decision criteria defined before a single line of code is written, validation data becomes subjective and political rather than actionable.

Step-by-Step Enterprise MVP Development Process

This process is iterative, not linear. The goal at each stage is to generate actionable insight, not just ship code. Codiot's delivery model — spanning UI/UX, development, data engineering, and AI integration — is built to support enterprises through each of these stages without overburdening internal teams.

Define the Problem and Validate the Hypothesis

Start by articulating the specific business or user problem the MVP is meant to solve. Define what success looks like in measurable terms, and identify which assumptions are being tested and what evidence would confirm or refute them.

Example criteria:

- "We assume that 60% of internal users will complete the new onboarding flow within 5 minutes"

- "We hypothesize that automating this approval step will reduce processing time by 40%"

- "We believe that integration with our existing CRM will increase data accuracy by at least 25%"

Without this clarity upfront, the MVP becomes a moving target driven by stakeholder opinions rather than evidence.
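One way to keep these criteria from drifting is to record each hypothesis with its metric and threshold before the build starts. The sketch below assumes every criterion is an "at least" threshold; the `Hypothesis` class, the metric names, and the observed pilot values are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hypothesis:
    """A testable assumption with the threshold that would confirm it."""
    statement: str
    metric: str
    target: float  # minimum observed value that confirms the hypothesis

    def is_confirmed(self, observed_value: float) -> bool:
        return observed_value >= self.target


hypotheses = [
    Hypothesis("60% of internal users complete onboarding within 5 minutes",
               "completion_rate_within_5_min", 0.60),
    Hypothesis("Automating the approval step cuts processing time by 40%",
               "processing_time_reduction", 0.40),
    Hypothesis("CRM integration raises data accuracy by at least 25%",
               "data_accuracy_gain", 0.25),
]

# Hypothetical pilot measurements, invented purely for illustration
observed = {
    "completion_rate_within_5_min": 0.64,
    "processing_time_reduction": 0.31,
    "data_accuracy_gain": 0.25,
}

results = {h.metric: h.is_confirmed(observed[h.metric]) for h in hypotheses}
for metric, confirmed in results.items():
    print(f"{metric}: {'confirmed' if confirmed else 'not confirmed'}")
```

Because the thresholds are fixed up front, the evaluation at the checkpoint becomes a comparison rather than a negotiation.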

Identify and Prioritize Core Features

Use a prioritization framework to identify the minimum feature set that directly tests the core hypothesis. The MoSCoW technique (Must have, Should have, Could have, Won't have) helps identify the minimum usable feature set, protecting the MVP from feature bloat.

Must Have (Baseline Enterprise Requirements):

- Security authentication and role-based access control

- Audit logging for compliance

- Integration with existing identity management

- Data encryption at rest and in transit

- Core features that directly test the hypothesis

Should Have (Valuable but Deferrable):

- Advanced reporting dashboards

- Additional user roles beyond core personas

- Enhanced notification systems

Could Have (Nice-to-Have):

- Customisable UI themes

- Advanced analytics

- Third-party integrations beyond core systems

Won't Have (Explicitly Deferred):

- Features that don't contribute to validating the hypothesis

- Enhancements driven by stakeholder preference rather than validation needs

Anything that doesn't contribute to validating the hypothesis gets deferred to post-MVP phases.
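As an illustration, a MoSCoW-tagged backlog can be grouped so that only the Must-have bucket defines the MVP scope. This is a minimal sketch; the `Priority` enum, the `group_by_priority` helper, and the backlog entries are hypothetical names chosen to mirror the lists above.

```python
from collections import defaultdict
from enum import Enum


class Priority(Enum):
    MUST = "Must have"
    SHOULD = "Should have"
    COULD = "Could have"
    WONT = "Won't have"


# Hypothetical backlog drawn from the example categories above
backlog = [
    ("Role-based access control", Priority.MUST),
    ("Audit logging for compliance", Priority.MUST),
    ("Advanced reporting dashboards", Priority.SHOULD),
    ("Customisable UI themes", Priority.COULD),
    ("Stakeholder-preference enhancements", Priority.WONT),
]


def group_by_priority(items):
    """Group feature names into one bucket per MoSCoW priority."""
    buckets = defaultdict(list)
    for name, priority in items:
        buckets[priority].append(name)
    return buckets


buckets = group_by_priority(backlog)
mvp_scope = buckets[Priority.MUST]  # only Must-have items enter the MVP build

for priority in Priority:
    print(f"{priority.value}: {buckets[priority]}")
```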

Build for Enterprise Baseline from Day One

While non-core features get deferred, some requirements cannot be treated as optional. Security architecture, audit logging, and integration interfaces must be built into the initial development — not patched in later. These are not "advanced features"; they are the enterprise's definition of a working product.

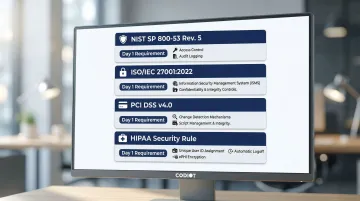

Regulatory baselines vary by sector, but the implementation requirements are non-negotiable from Day 1:

| Regulatory Standard | Key MVP Implementation Requirements |
|---|---|
| NIST SP 800-53 Rev. 5 | Implement strict Access Control (AC) and Audit/Accountability (AU) logging, including cryptographic protection of audit tools |
| ISO/IEC 27001:2022 | Establish an Information Security Management System (ISMS) ensuring data confidentiality, integrity, and availability |
| PCI DSS v4.0 | Deploy change-and-tamper-detection mechanisms and manage all payment page scripts |
| HIPAA Security Rule | Enforce unique user identification, automatic logoff, and encryption of ePHI at rest and in transit |

Enterprises in regulated sectors must treat these as Day 1 requirements, not Phase 2 enhancements.
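To make the idea of built-in access control and audit logging concrete, here is a minimal sketch of a permission check that records every attempt, whether allowed or denied. It is illustrative only and not a compliance implementation: the role names, permissions, `perform_action` function, and in-memory log are all assumptions, and a real system would write to protected, append-only storage.

```python
import json
from datetime import datetime, timezone

# Hypothetical role-to-permission mapping
ROLE_PERMISSIONS = {
    "analyst": {"read_record"},
    "admin": {"read_record", "update_record"},
}

# In-memory stand-in for an audit store (assumption for this sketch)
audit_log = []


def perform_action(user_id: str, role: str, action: str) -> bool:
    """Check the role's permissions and record the attempt, allowed or not."""
    allowed = action in ROLE_PERMISSIONS.get(role, set())
    audit_log.append({
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user_id": user_id,  # unique user identification
        "role": role,
        "action": action,
        "allowed": allowed,
    })
    return allowed


perform_action("u-1001", "analyst", "read_record")    # permitted for this role
perform_action("u-1001", "analyst", "update_record")  # denied, but still logged
print(json.dumps(audit_log, indent=2))
```

Logging denied attempts alongside permitted ones is the design choice worth noting: auditors typically want evidence of what was blocked, not only what succeeded.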

Run a Controlled Rollout and Collect Structured Feedback

Use a phased release approach: start with a limited internal or beta user group, define feedback collection mechanisms upfront (usage analytics, surveys, structured interviews), and set a clear checkpoint timeline (e.g., weeks 12–16) for evaluating initial validation data.

Phased rollout structure:

- Internal pilot (weeks 8–10) — Deploy to 10–20 internal users representing core personas

- Structured feedback collection — Implement analytics tracking, user surveys, and scheduled interviews

- Validation checkpoint (weeks 12–16) — Evaluate results against pre-defined success criteria

- Controlled expansion (if validated) — Expand to broader user base or additional departments

The controlled rollout prevents organisation-wide disruption while generating the data needed to make informed decisions.

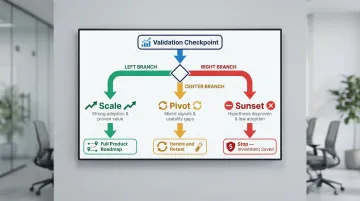

Analyse, Decide, and Iterate

At the validation checkpoint, evaluate results against pre-defined success criteria. Make an explicit decision: scale the product, pivot the approach, or sunset the initiative.

A decision to stop is not a failure; it is the MVP working as intended, preventing further investment in the wrong direction. Halting a flawed initiative after the MVP can save millions in downstream development and maintenance, since ongoing maintenance and support typically consume 15% to 25% of the initial development cost annually. Sunsetting an unvalidated MVP before that clock starts ticking is, in itself, a measurable return on the investment made.
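The maintenance figure is easier to weigh with numbers attached. The sketch below applies the 15% to 25% annual range to a hypothetical $500,000 initial build over three years; the build cost, the time horizon, and the function name are assumptions made for illustration.

```python
def annual_maintenance_range(initial_cost: float,
                             low_rate: float = 0.15,
                             high_rate: float = 0.25) -> tuple[float, float]:
    """Return the (low, high) yearly maintenance cost for a given build cost."""
    return initial_cost * low_rate, initial_cost * high_rate


initial_cost = 500_000  # hypothetical initial development cost in dollars
years = 3               # hypothetical planning horizon

low, high = annual_maintenance_range(initial_cost)
print(f"Annual maintenance: ${low:,.0f} to ${high:,.0f}")
print(f"Over {years} years: ${low * years:,.0f} to ${high * years:,.0f}")
```

Under these assumptions, sunsetting the product avoids a recurring cost on top of any further build spend, which is the saving the paragraph above describes.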

From MVP to Full Product: Scale, Pivot, or Sunset

Establish Decision Criteria Before Development Begins

Define what usage patterns, adoption thresholds, or business metrics would justify full-scale investment. Without these criteria set in advance, validation data is subjective and political rather than actionable.

Example decision criteria:

- Scale: 70%+ user adoption rate, 40%+ reduction in processing time, positive ROI projection within 18 months

- Pivot: 40–60% adoption, positive user feedback but significant usability concerns, clear path to improvement

- Sunset: <30% adoption, negative user feedback, technical feasibility concerns, or hypothesis disproven
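These thresholds can be encoded so the checkpoint decision is mechanical rather than debated. The sketch below is a simplification that looks only at adoption rate and whether the hypothesis was disproven; the function name is hypothetical, and the "needs review" outcome for adoption between the listed bands (30–40% and 60–70%) is an assumption, since the example criteria do not cover those ranges.

```python
def recommend_path(adoption_rate: float, hypothesis_disproven: bool = False) -> str:
    """Map checkpoint results to a post-MVP path using the example thresholds."""
    if hypothesis_disproven or adoption_rate < 0.30:
        return "sunset"
    if adoption_rate >= 0.70:
        return "scale"
    if 0.40 <= adoption_rate <= 0.60:
        return "pivot"
    # Assumption: results between the defined bands go to a human decision
    return "needs review"


for rate in (0.75, 0.50, 0.20, 0.65):
    print(f"{rate:.0%} adoption -> {recommend_path(rate)}")
```

In practice the qualitative criteria (user feedback, ROI projection, feasibility concerns) would sit alongside this check; the value of writing it down is that the bands are agreed before the data arrives.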

The Three Post-MVP Paths

| Path | When It Applies | Next Step |
|---|---|---|
| Scale | Strong adoption, proven business value, technical viability confirmed | Move to full product roadmap |
| Pivot | Positive signals but usability gaps, unexpected user behaviours, or wrong target segment | Iterate on concept before committing to full build |
| Sunset | Hypothesis disproven, low adoption, negative feedback | Stop: the MVP saved the organization from a costly mistake |

Each path is a valid outcome. The MVP's job is to surface that answer faster and cheaper than a full build ever could.

Address Institutional Knowledge Continuity

Whether the MVP was built internally or externally, plan for structured knowledge transfer—architecture documentation, codebase walkthroughs, and decision logs—so the full-product team inherits a solid foundation rather than a black box.

Essential knowledge transfer elements:

- Architecture diagrams and system design documentation

- Codebase walkthroughs with technical team

- Decision logs explaining key architectural and feature choices

- Integration specifications and API documentation

- Security and compliance implementation details

- User research findings and validation data

Without this handoff, teams rebuilding context from scratch introduce delays, duplicate decisions, and avoidable technical debt.

Frequently Asked Questions

What is MVP development in business?

MVP (Minimum Viable Product) development is the process of building the leanest version of a product that tests a core business hypothesis with real users, avoiding the risk of full-scale investment before validating demand or feasibility.

What is the difference between MVP, MMP, and MLP?

An MVP (Minimum Viable Product) is built purely to test a hypothesis. An MMP (Minimum Marketable Product) includes just enough features for a commercial launch. An MLP (Minimum Lovable Product) focuses on delivering a delightful early user experience.

In enterprise product development, which comes first: PoC or MVP?

A Proof of Concept (PoC) typically comes first—it validates whether the technology or integration is technically feasible. Once technical viability is confirmed, the MVP tests whether the product concept delivers real business value and user adoption.

How long does enterprise MVP development typically take?

Timelines vary by complexity, integration needs, and compliance requirements, but enterprise MVPs typically take 10–20 weeks. In regulated industries like healthcare and finance, compliance requirements can push timelines to 6–12 months. Enterprises building with newly formed internal teams often take significantly longer due to onboarding and governance overhead.

What features should be included in an enterprise MVP?

An enterprise MVP should include core features that directly test the business hypothesis, plus non-negotiable enterprise baselines: security authentication, data encryption, audit logging, and the minimum integrations required for the product to function in its real environment.

How much does enterprise MVP development cost?

Costs vary based on feature scope, integration complexity, compliance needs, and team composition. According to 2026 pricing benchmarks, high-complexity enterprise systems with advanced security and compliance (HIPAA, SOC 2) range from $225,000 to $750,000+. Compliance requirements can add $20,000–$55,000, and legacy integration adds $15,000–$37,000 per system.
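As a rough illustration of how the add-on figures combine, the sketch below layers the quoted compliance and per-system integration ranges on top of a base build estimate. The $300,000 base, the two legacy systems, and the assumption that the add-ons are simply additive are all hypothetical; actual quotes may bundle these costs differently.

```python
COMPLIANCE_RANGE = (20_000, 55_000)              # quoted compliance add-on
INTEGRATION_PER_SYSTEM_RANGE = (15_000, 37_000)  # quoted per-system integration add-on


def estimate_total_range(base_estimate: int, legacy_systems: int) -> tuple[int, int]:
    """Add compliance and per-system integration ranges to a base build estimate."""
    low = (base_estimate + COMPLIANCE_RANGE[0]
           + INTEGRATION_PER_SYSTEM_RANGE[0] * legacy_systems)
    high = (base_estimate + COMPLIANCE_RANGE[1]
            + INTEGRATION_PER_SYSTEM_RANGE[1] * legacy_systems)
    return low, high


# Hypothetical inputs: a $300,000 base build integrating with 2 legacy systems
low, high = estimate_total_range(base_estimate=300_000, legacy_systems=2)
print(f"Estimated total: ${low:,} to ${high:,}")
```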