Introduction

According to CB Insights' analysis of 431 failed VC-backed startups, 43% collapsed due to poor product-market fit and 70% ran out of capital. The figures overlap because a startup can fail for more than one reason, and running out of money was usually the final symptom, not the root cause. The real problem? Building a product no one wanted.

An MVP — Minimum Viable Product — is the simplest working version of your product that delivers core value to real users. The goal is validated learning before you commit significant resources. Building lean isn't a budget compromise; it's how you test your riskiest assumptions with minimal waste.

This guide walks through how to choose the right MVP type, the development process from concept to launch, and how to prioritize features so you reach the market faster without burning through your runway.

TLDR

- An MVP is a tool for testing your riskiest assumptions with minimal investment, not a cheap version of your full product

- Pick your MVP type—Concierge, Landing Page, Wizard of Oz, or Functional—based on what assumption you need to validate first

- Feature prioritization is the most common bottleneck—use MoSCoW and the 80/20 rule to cut ruthlessly

- Outsourcing to an experienced partner like Codiot can cut time-to-market by up to 25%

Choosing the Right MVP Type for Your Startup

Not all MVPs are built the same way. The right type depends on what assumption you're testing—demand, usability, or technical feasibility.

Concierge MVP

This type delivers the service manually before any automation is built. It's ideal for validating demand and user workflows in service-based startups without writing a single line of code.

Real-world example: In 1999, Zappos founder Nick Swinmurn photographed shoes at local stores and manually purchased and shipped them when orders came in. This unscalable approach validated that people would buy shoes online without trying them on first—before investing millions in infrastructure.

Landing Page MVP

A marketing page, waitlist, or sign-up form used to measure interest in an idea before building it. Good for very early-stage ideas when you need to validate demand quickly.

Real-world example: Before building Buffer's social media scheduling tool, Joel Gascoigne created a two-page website with pricing tiers ($0, $5, $20). Users who clicked a paid plan were asked for their email. Once he saw users actively selecting paid tiers, he spent 7 weeks coding the actual product.

Wizard of Oz MVP

The product appears automated to users but is driven by manual processes behind the scenes. Used to validate complex user behavior without building the full system—particularly useful for AI-style features or matching algorithms.

Real-world example: Early Airbnb hosts manually photographed listings and wrote descriptions themselves rather than relying on automated tools. The founding team personally visited hosts in New York to stage apartments and take professional photos—confirming that quality presentation drove bookings before building any automated photography or onboarding systems.

Functional (Single-Feature) MVP

A working product with one core feature that real users can actually interact with. Best when you need real usage data and behavioral feedback, not just demand signals.

Real-world example: Instagram launched in October 2010 as a focused photo-sharing app. Kevin Systrom and Mike Krieger stripped away the extra features of their previous app Burbn (including check-ins, messaging, and video) to focus exclusively on photos, comments, likes, and filters. This hyper-focused MVP acquired 100,000 users in less than a week and reached 1 million users in under three months.

Decision Framework

Choose your MVP type based on three factors:

- What assumption needs testing - Demand? Usability? Technical feasibility?

- Available budget and timeline - How much can you invest before validation?

- Data requirements - Do you need real user interaction data or just demand signals?

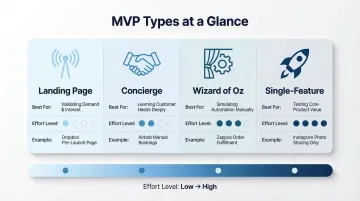

| MVP Type | Best For | Effort Level | Example |
|---|---|---|---|
| Landing Page | Problem resonance and willingness to pay | Very Low (Days) | Buffer (2010) |
| Concierge/Wizard of Oz | Solution value and user experience | Low Tech, High Manual Labor | Zappos (1999) |
| Functional (Single-Feature) | Core product utility and engagement | Medium (Weeks) | Instagram (2010) |
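
The comparison above can be read as a simple lookup. The sketch below is one way to encode it, assuming three illustrative assumption categories taken from the "Best For" column; the `Assumption` enum and `recommend_mvp_type` function are hypothetical names, not part of any library.

```python
from enum import Enum


class Assumption(Enum):
    """Illustrative categories for the riskiest assumption (names are assumptions)."""
    DEMAND = "demand"            # Do people want this, and will they pay?
    SOLUTION_VALUE = "solution"  # Does the service or workflow solve the problem?
    ENGAGEMENT = "engagement"    # Do people keep using the core feature?


# Mapping taken from the comparison table in this section.
RECOMMENDED_TYPE = {
    Assumption.DEMAND: "Landing Page",
    Assumption.SOLUTION_VALUE: "Concierge/Wizard of Oz",
    Assumption.ENGAGEMENT: "Functional (Single-Feature)",
}


def recommend_mvp_type(assumption: Assumption, needs_usage_data: bool = False) -> str:
    """Suggest an MVP type for the assumption you need to test first.

    If real interaction data is required, demand signals alone are not enough,
    so the suggestion is upgraded to a functional MVP.
    """
    if needs_usage_data:
        return RECOMMENDED_TYPE[Assumption.ENGAGEMENT]
    return RECOMMENDED_TYPE[assumption]


print(recommend_mvp_type(Assumption.DEMAND))                         # Landing Page
print(recommend_mvp_type(Assumption.DEMAND, needs_usage_data=True))  # Functional (Single-Feature)
```

Budget and timeline are left out of the function on purpose: they are judgment calls that narrow the choice further rather than inputs with fixed thresholds.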

The Step-by-Step MVP Development Process

A structured process prevents over-engineering and keeps your team aligned on validated learning rather than feature completion.

Step 1 – Define Goals and Target Audience

Every MVP starts with three critical elements:

- Clear problem statement - What specific pain point are you solving?

- Defined target user persona - Who experiences this problem most acutely?

- Specific success metrics - Number of sign-ups, activation rate, retention percentage

Miss any of these and you'll burn sprint cycles solving a problem nobody validated. Success metrics should be measurable within 4–12 weeks of launch.
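
The success metrics listed above reduce to simple ratios. Here is a minimal sketch, assuming hypothetical counts exported from your own analytics; the function names, the sample numbers, and the choice to measure retention at week 4 against activated users are all illustrative assumptions.

```python
def rate(numerator: int, denominator: int) -> float:
    """Return numerator / denominator as a fraction, or 0.0 when there is no data."""
    return numerator / denominator if denominator else 0.0


def summarize_cohort(signups: int, activated: int, retained_week_4: int) -> dict[str, float]:
    """Compute activation and retention rates for one sign-up cohort.

    Activation rate = activated users / sign-ups.
    Retention rate  = users still active in week 4 / activated users
                      (one possible definition; pick yours before launch).
    """
    return {
        "activation_rate": rate(activated, signups),
        "retention_rate": rate(retained_week_4, activated),
    }


# Hypothetical cohort: 200 sign-ups, 80 activated, 30 still active after 4 weeks.
metrics = summarize_cohort(signups=200, activated=80, retained_week_4=30)
print(metrics)  # {'activation_rate': 0.4, 'retention_rate': 0.375}
```

Writing the definitions down as code before launch makes it harder to redefine "success" after the numbers come in.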

Step 2 – Identify and Prioritize Core Features

This step forces you to list every possible feature and then aggressively cut it down to only what directly validates your core value proposition.

The output should be a lean feature list—not a product roadmap. Ask yourself: "Which single feature, if removed, would make the product useless?" Build only that.

That discipline matters more than most teams realize. Pendo's 2019 Feature Adoption Report found that 80% of features in the average software product are rarely or never used, representing up to $29.5 billion in wasted R&D across the cloud software industry.

Step 3 – Design a Prototype

Once your feature list is locked, wireframes and low-fidelity prototypes expose UX issues cheaply, align stakeholders, and prevent costly redesigns mid-development.

The cost of fixing defects rises steeply as work moves through the development lifecycle. A widely cited figure attributed to the IBM Systems Science Institute puts the cost of fixing a defect after launch at up to 100x the cost of fixing it during the design phase.

Tools like Figma enable rapid wireframing and stakeholder feedback before writing a single line of code.

Step 4 – Build with Agile Sprints

With a validated prototype in hand, short development cycles (1–2 week sprints) let you test and adjust in real time. This contrasts sharply with waterfall approaches, where feedback arrives too late, and at too high a cost, to act on.

Benefits of sprint-based development:

- Forces scope reduction and ruthless prioritization

- Enables continuous stakeholder feedback

- Identifies technical blockers early

- Maintains team momentum and morale

According to DORA's 2018 Accelerate State of DevOps report, elite software teams deploy code 46 times more frequently than low performers while maintaining a 7x lower change failure rate.

Step 5 – Launch, Measure, and Iterate

The post-launch phase is where validated learning happens:

- Release to a small group of early adopters - 50–200 users is enough for initial feedback

- Track key metrics - Activation rate, churn, feature adoption, time-to-value

- Collect qualitative feedback - User interviews, surveys, support tickets

- Decide next steps - Iterate, pivot, or scale based on both quantitative and qualitative data

Skipping this structure is how startups confuse "launched" with "validated." The Build-Measure-Learn cycle is what turns early-user data into a decision — iterate, pivot, or scale with confidence.
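
One way to keep the decision honest is to compare measured results against targets agreed before launch. The sketch below is illustrative only: the rule (every target met means scale, none met means pivot, anything in between means iterate), the metric names, and the target values are assumptions, and qualitative feedback should still inform the final call.

```python
def next_step(measured: dict[str, float], targets: dict[str, float]) -> str:
    """Suggest a direction by comparing measured metrics with pre-agreed targets.

    Assumes every metric is "higher is better"; express churn as retention
    (1 - churn) before passing it in.
    """
    if not targets:
        raise ValueError("Define at least one target before launch")
    met = [name for name, target in targets.items() if measured.get(name, 0.0) >= target]
    if len(met) == len(targets):
        return "scale"
    if not met:
        return "pivot"
    return "iterate"


# Hypothetical targets and results for a first cohort.
targets = {"activation_rate": 0.35, "retention_rate": 0.40}
measured = {"activation_rate": 0.40, "retention_rate": 0.375}
print(next_step(measured, targets))  # iterate
```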

Feature Prioritization: What to Build First (and What to Skip)

Feature prioritization is the highest-leverage skill in MVP development. Getting this wrong is the fastest way to overspend and delay launch.

The MoSCoW Method

This framework categorizes features into four buckets:

- Must-Have — The product fails to deliver core value without these; no workarounds exist

- Should-Have — Valuable additions, but users can operate without them at launch

- Could-Have — Desirable if time allows, but skipping them won't hurt adoption

- Won't-Have — Deliberately out of scope; revisit after you've validated the core

An MVP should contain only Must-Haves. If you're building Should-Haves into your MVP, you're over-engineering.

Example for a SaaS project management tool:

Must-Have:

- Create and assign tasks

- Set due dates

- Mark tasks complete

Should-Have:

- Task comments and attachments

- Email notifications

- Task templates

- Basic search and filter

Could-Have:

- Time tracking

- Gantt charts

- Custom fields

Won't-Have:

- Resource management

- Advanced reporting
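
The same example can be kept as a small tagged backlog, which makes the MVP scope a filter rather than a debate. This is a minimal sketch; the bucket labels and function names are illustrative assumptions.

```python
from collections import defaultdict

# Feature -> MoSCoW bucket, using the project management example above.
BACKLOG = {
    "Create and assign tasks": "must",
    "Set due dates": "must",
    "Mark tasks complete": "must",
    "Task comments and attachments": "should",
    "Email notifications": "should",
    "Task templates": "should",
    "Basic search and filter": "should",
    "Time tracking": "could",
    "Gantt charts": "could",
    "Custom fields": "could",
    "Resource management": "wont",
    "Advanced reporting": "wont",
}


def group_by_bucket(backlog: dict[str, str]) -> dict[str, list[str]]:
    """Group features by bucket, preserving backlog order within each bucket."""
    grouped: dict[str, list[str]] = defaultdict(list)
    for feature, bucket in backlog.items():
        grouped[bucket].append(feature)
    return dict(grouped)


def mvp_scope(backlog: dict[str, str]) -> list[str]:
    """Return only the Must-Have features, which is all an MVP should contain."""
    return [feature for feature, bucket in backlog.items() if bucket == "must"]


print(mvp_scope(BACKLOG))
# ['Create and assign tasks', 'Set due dates', 'Mark tasks complete']
```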

The 80/20 Rule Applied to MVP Features

MoSCoW gives you a framework for categorizing features — the Pareto Principle tells you where to focus your energy within that framework. The 80/20 rule holds that 20% of features deliver 80% of the value users actually care about.

To identify that critical 20%, return to the question from Step 2: "Which single feature, if removed, would make the product useless?" That answer defines your build scope. Everything else is a candidate for deferral.

The Standish Group's CHAOS research found that 64% to 80% of custom features deliver low to no value. Don't contribute to this waste.
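
If you can attach even rough value scores to features (for example, how often each came up in user interviews), the Pareto cut can be computed directly. The sketch below is a simple cumulative-share cut under that assumption; the scores are made up for illustration and `pareto_cut` is a hypothetical helper.

```python
def pareto_cut(value_by_feature: dict[str, float], threshold: float = 0.8) -> list[str]:
    """Return the highest-value features whose combined share first reaches the threshold."""
    total = sum(value_by_feature.values())
    if total <= 0:
        return []
    selected: list[str] = []
    running = 0.0
    # Walk features from most to least valuable, stopping once the share is reached.
    for feature, value in sorted(value_by_feature.items(), key=lambda kv: kv[1], reverse=True):
        selected.append(feature)
        running += value
        if running / total >= threshold:
            break
    return selected


# Hypothetical value scores for a project management tool.
scores = {
    "Create and assign tasks": 55,
    "Set due dates": 30,
    "Email notifications": 6,
    "Time tracking": 4,
    "Gantt charts": 3,
    "Custom fields": 2,
}
print(pareto_cut(scores))  # ['Create and assign tasks', 'Set due dates']
```

The scores matter less than the exercise: ranking features by evidence forces the conversation about which ones actually carry the product.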

User Journey Mapping for Feature Cuts

Map the most critical user journey—from sign-up to first moment of value. This reveals which features are truly necessary and which are peripheral.

Example journey for a meal planning app:

- Sign up with email

- Answer 3 preference questions

- View first week's meal plan

- Save one recipe

Anything that doesn't sit on this critical path can be deferred to post-MVP iterations. Recipe sharing, grocery list exports, and nutritional tracking are all "nice-to-haves" that distract from validating the core value proposition.
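
The journey map doubles as a scope filter: a feature is in the MVP only if it supports a step on the critical path. The sketch below uses the meal planning example above; the feature names and the feature-to-step mapping are hypothetical.

```python
# Steps on the critical path, from the meal planning example above.
CRITICAL_PATH = [
    "Sign up with email",
    "Answer 3 preference questions",
    "View first week's meal plan",
    "Save one recipe",
]

# Hypothetical backlog: each feature lists the journey steps it supports.
FEATURES = {
    "Email sign-up": ["Sign up with email"],
    "Preference quiz": ["Answer 3 preference questions"],
    "Weekly plan view": ["View first week's meal plan"],
    "Saved recipes": ["Save one recipe"],
    "Recipe sharing": [],
    "Grocery list export": [],
    "Nutritional tracking": [],
}


def split_scope(features: dict[str, list[str]], critical_path: list[str]) -> tuple[list[str], list[str]]:
    """Split features into (build_now, defer) by whether they touch the critical path."""
    steps = set(critical_path)
    build_now: list[str] = []
    defer: list[str] = []
    for name, supported_steps in features.items():
        if steps.intersection(supported_steps):
            build_now.append(name)
        else:
            defer.append(name)
    return build_now, defer


def uncovered_steps(features: dict[str, list[str]], critical_path: list[str]) -> list[str]:
    """Return journey steps that no feature supports yet (gaps in the MVP)."""
    covered = {step for supported in features.values() for step in supported}
    return [step for step in critical_path if step not in covered]


build_now, defer = split_scope(FEATURES, CRITICAL_PATH)
print(defer)  # ['Recipe sharing', 'Grocery list export', 'Nutritional tracking']
print(uncovered_steps(FEATURES, CRITICAL_PATH))  # []
```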

Build in Learning Features

Once you've trimmed your feature set to the critical path, you need tools to measure whether that path is actually working. Analytics and feedback mechanisms often deliver more value in an MVP than extra product features — without them, you're iterating blind.

Essential learning features:

- Basic event tracking (sign-ups, feature usage, drop-off points)

- In-app feedback widget or survey

- Session recording for UX analysis

- Simple A/B testing capability

These are commonly overlooked MVP requirements that dramatically accelerate validated learning.
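
Basic event tracking does not need a large tool on day one. The sketch below is a minimal in-memory version that records events and reports how many users reach each funnel step; the `EventLog` class and event names are hypothetical, and a real MVP would persist events or forward them to an analytics service.

```python
from collections import defaultdict
from datetime import datetime, timezone


class EventLog:
    """In-memory event tracker, for illustration only."""

    def __init__(self) -> None:
        self._events: list[dict] = []

    def track(self, user_id: str, event: str) -> None:
        """Record that a user performed an event, with a UTC timestamp."""
        self._events.append(
            {"user_id": user_id, "event": event, "at": datetime.now(timezone.utc)}
        )

    def funnel(self, steps: list[str]) -> list[tuple[str, int]]:
        """Count users who performed each step and every step before it.

        Simplification: event order is not checked, only presence.
        """
        users_by_event: dict[str, set[str]] = defaultdict(set)
        for entry in self._events:
            users_by_event[entry["event"]].add(entry["user_id"])

        counts: list[tuple[str, int]] = []
        remaining: set[str] | None = None
        for step in steps:
            reached = users_by_event[step]
            remaining = set(reached) if remaining is None else remaining & reached
            counts.append((step, len(remaining)))
        return counts


log = EventLog()
for user in ["u1", "u2", "u3", "u4"]:
    log.track(user, "signed_up")
for user in ["u1", "u2", "u3"]:
    log.track(user, "completed_quiz")
log.track("u1", "viewed_plan")

print(log.funnel(["signed_up", "completed_quiz", "viewed_plan"]))
# [('signed_up', 4), ('completed_quiz', 3), ('viewed_plan', 1)]
```

In this made-up data the biggest drop is between the quiz and the first plan view, which is the kind of signal that tells you where to look next.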

Common MVP Mistakes Startups Make (and How to Avoid Them)

Overbuilding the MVP

The most frequent mistake is treating the MVP as a "lite version of the full product" rather than a hypothesis-testing tool. This leads to unnecessary scope creep, delayed launches, and wasted capital.

An MVP exists to test assumptions — not to impress investors or match established players feature-for-feature.

Ignoring Real User Feedback

Some startups launch but never close the feedback loop. An MVP without a structured feedback mechanism is just an unfinished product.

The Build-Measure-Learn cycle is the corrective framework here. Every launch should trigger structured measurement — tracking key metrics, running qualitative user interviews, and identifying what to adjust before the next iteration.

Choosing the Wrong Technology Stack

Selecting overly complex or non-scalable technologies early on slows iteration speed and inflates cost.

The right stack for an MVP prioritizes:

- Speed of development

- Flexibility for pivots

- Availability of developers

- Proven reliability

Scalability becomes a priority once you've confirmed real demand — not before. Lock in validation first, then architect for growth.

Build In-House or Outsource Your MVP?

The In-House Challenge

In-house development gives founders full control and deep product knowledge but requires hiring skilled developers, designers, and product managers.

According to the US Bureau of Labor Statistics, the median annual wage for a software developer is $133,080. UX designers command a median total pay of $108,294. Recruiting, onboarding, and paying a full cross-functional team can consume your entire pre-seed budget before anyone writes a single line of production code.

When Outsourcing Makes Sense

A specialized MVP development partner brings pre-built processes, cross-functional expertise (design, development, QA), and domain experience from multiple product launches. The result: faster launches with fewer coordination bottlenecks.

Key advantages:

- Offshore and nearshore engineers typically cost 40–70% less than US or Western European hires

- Outsourcing can cut time-to-market by up to 25%

- Access to full-stack teams without lengthy recruiting

- Reduced technical debt through experienced architecture decisions
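
To put rough numbers on the cost gap, the sketch below combines the median developer wage quoted earlier with the 40–70% savings range. It is back-of-the-envelope arithmetic under stated assumptions: the team size and duration are hypothetical, only salaries are counted (no benefits, recruiting, or equity), and the savings range is applied to the whole salary baseline.

```python
MEDIAN_DEVELOPER_SALARY_USD = 133_080  # annual median wage quoted in this article


def in_house_cost(developers: int, months: int,
                  annual_salary: float = MEDIAN_DEVELOPER_SALARY_USD) -> float:
    """Salary-only cost of an in-house team for the given number of months."""
    return developers * annual_salary * months / 12


def outsourced_cost_range(baseline: float, min_saving: float = 0.40,
                          max_saving: float = 0.70) -> tuple[float, float]:
    """Return (low, high) outsourced cost, assuming a 40-70% saving on the baseline."""
    return baseline * (1 - max_saving), baseline * (1 - min_saving)


# Hypothetical scenario: 3 developers for a 4-month MVP build.
baseline = in_house_cost(developers=3, months=4)
low, high = outsourced_cost_range(baseline)

print(f"In-house salaries: ${baseline:,.0f}")                # In-house salaries: $133,080
print(f"Outsourced estimate: ${low:,.0f} to ${high:,.0f}")   # Outsourced estimate: $39,924 to $79,848
```

Swap in your own team size, duration, and quoted rates; the point is to compare like for like before choosing a model.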

Codiot, for instance, handles end-to-end MVP builds spanning UI/UX design, web, and mobile development, with AI integration built into the process — useful when you need speed without accumulating technical debt.

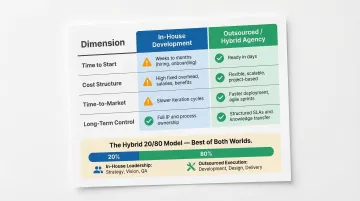

The Hybrid Approach

Many startups outsource the MVP build while gradually building an internal team as traction grows. You move fast early without locking yourself into high fixed costs before product-market fit.

The 20/80 model:

- 20% in-house leadership (technical founder or product owner)

- 80% outsourced execution team

- Clear handoff plan as the product scales toward a full internal team

This approach can launch MVPs 20–30% faster than hiring fully in-house, while maintaining strategic control and product vision.

| Dimension | In-House Development | Outsourced/Hybrid Agency |
|---|---|---|
| Time to Start | 30–45 days (recruiting & onboarding) | 2–4 weeks (ready-to-deploy teams) |
| Cost Structure | High fixed costs (salaries, benefits, equity) | Variable, milestone-based spend |
| Time-to-Market | Slower (team formation required) | Up to 25% faster |
| Long-Term Control | High control, deep product knowledge | Requires deliberate transition plan |

Frequently Asked Questions

What is an MVP for startups?

An MVP is the simplest working product version used to test core assumptions with real users. Startups use it to validate demand and gather feedback before committing full resources to development, reducing the risk of building products nobody wants.

What is the 80/20 rule for startups?

The Pareto Principle states that roughly 20% of features drive 80% of user value. For startups, the goal is to identify and build only that critical 20% in the MVP, to avoid overbuilding and wasting resources on features users won't use.

What comes first, PoC or MVP?

A Proof of Concept (PoC) tests whether an idea is technically feasible (internal validation), while an MVP tests whether real users want the solution (external validation). A PoC typically comes before an MVP when the underlying technology itself is unproven.

How long does it take to build an MVP for a startup?

Timeline depends on complexity and MVP type. Low-fidelity MVPs (landing pages, prototypes) can be done in 2–4 weeks, while functional app MVPs typically take 6–14 weeks using agile development. Industry data shows 93% of development companies can set up an MVP in 3 to 12 weeks.

How much does it cost to build an MVP?

MVP costs typically range from ₹8,00,000 to ₹1,20,00,000+, depending on feature complexity, tech stack, design requirements, and whether you build in-house or partner with a development company. Very simple MVPs can start lower, at around ₹4,00,000, while AI-heavy builds sit at the higher end of the range.

Should I build my MVP in-house or outsource it?

In-house development offers control but requires time and hiring. Outsourcing is faster and brings expertise, provided you choose a partner with proven MVP delivery experience. Many startups outsource the MVP to validate the market first, then build an internal team once traction is proven.